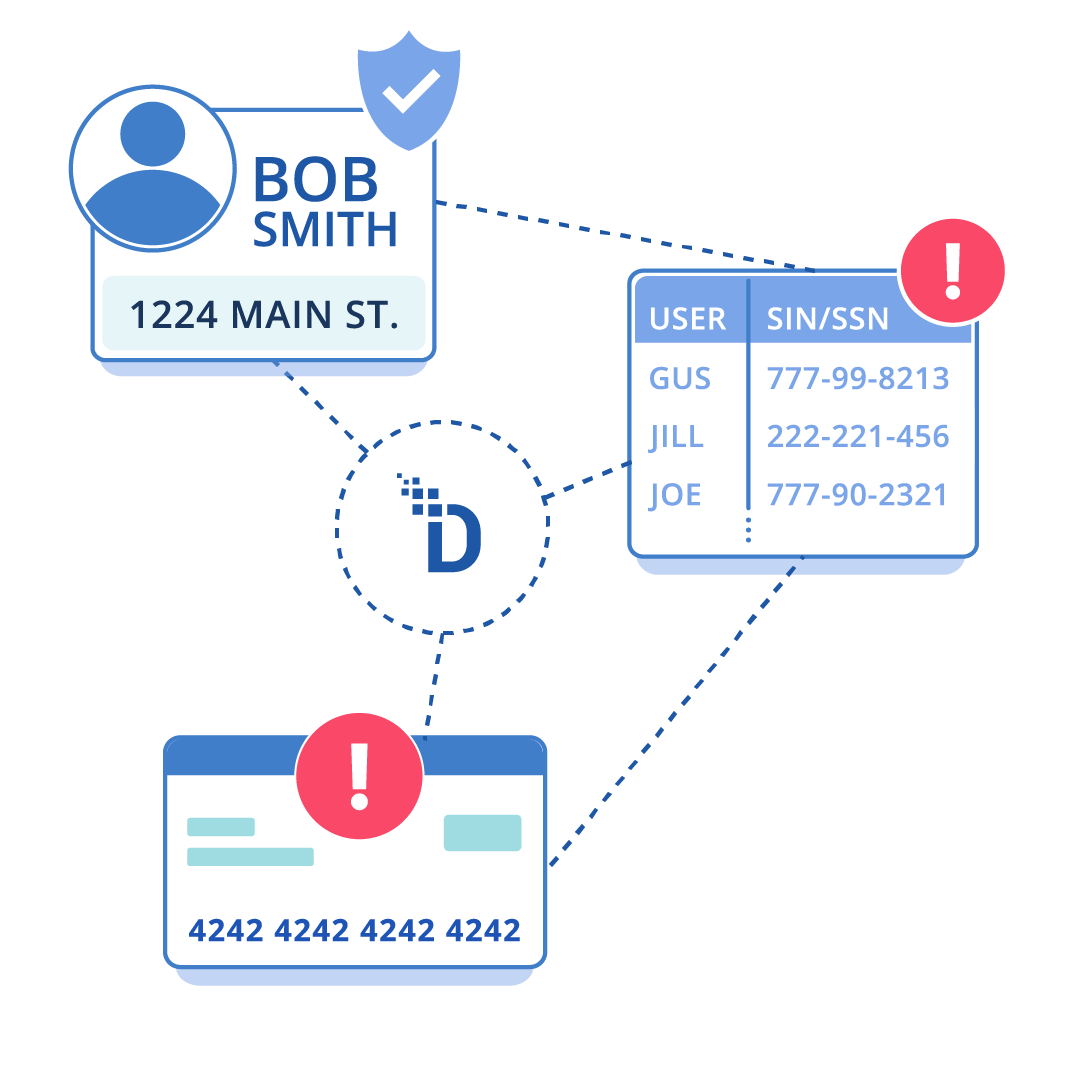

Customer data, personal information, and regulated fields like social security numbers, protected health information, and the primary account number (PAN) are copied across systems, logs, cloud analytics, and third parties – turning a single mistake into a reportable data breach and escalating breach costs.

Tokenization is how enterprises neutralize that risk by ensuring lower-value systems never see the original data.

DataStealth delivers data tokenization solutions that replace sensitive data with tokenized data (tokens) before it lands in databases, apps, files, or cloud services.

Because tokens have no exploitable meaning outside the tokenization system, they reduce the blast radius of compromise and trim compliance scope.

The result is more secure data, simpler compliance, and a cost-effective tokenization solution that protects high-volume systems without adding the latency and refactoring tax that kills projects.

4.8/5 rating on G2 and other review platforms for data-centric security and ease of deployment.

Named a top data security platform for giving organizations visibility into shadow IT and high-risk data.

DataStealth is recognized in Forrester’s Data Security Platform Landscape Report and trusted by highly regulated organizations that cannot afford data exposure or downtime.

No Refactoring, No Performance Hit

Tokenization should be simple in theory: replace sensitive fields with valueless tokens. In enterprise reality, tokenization fails for predictable reasons.

DataStealth is built to avoid those traps.

We tokenize at the data layer so you can protect PANs, PII, and PHI across hybrid estates without rewriting apps.

We support the token types and controls enterprises demand – format preservation for legacy compatibility, deterministic behavior for analytics, strict access controls for detokenization, and key control models like BYOK/HYOK – so security improves without operational drag.

Tokenize primary account numbers (PAN) before storage; downstream systems only process tokens, reducing exposure and simplifying compliance obligations under PCI DSS.

Use deterministic tokenized identifiers so teams can join and analyze data in cloud platforms without exposing personal information or customer identifiers to broader access.

Replace sensitive fields before data is replicated to dev/test environments or shared with vendors. Pair with data masking where appropriate so teams work with realistic values without touching the original data.

Detect regulated data elements (PAN, SSN, PHI) across structured flows and payloads – so protection policies apply consistently.

Built for high-throughput environments so that data tokenization doesn’t become the bottleneck in payments, apps, or data flows.

Log access and policy events so security can review who accessed what and when – supporting audit readiness and incident response.

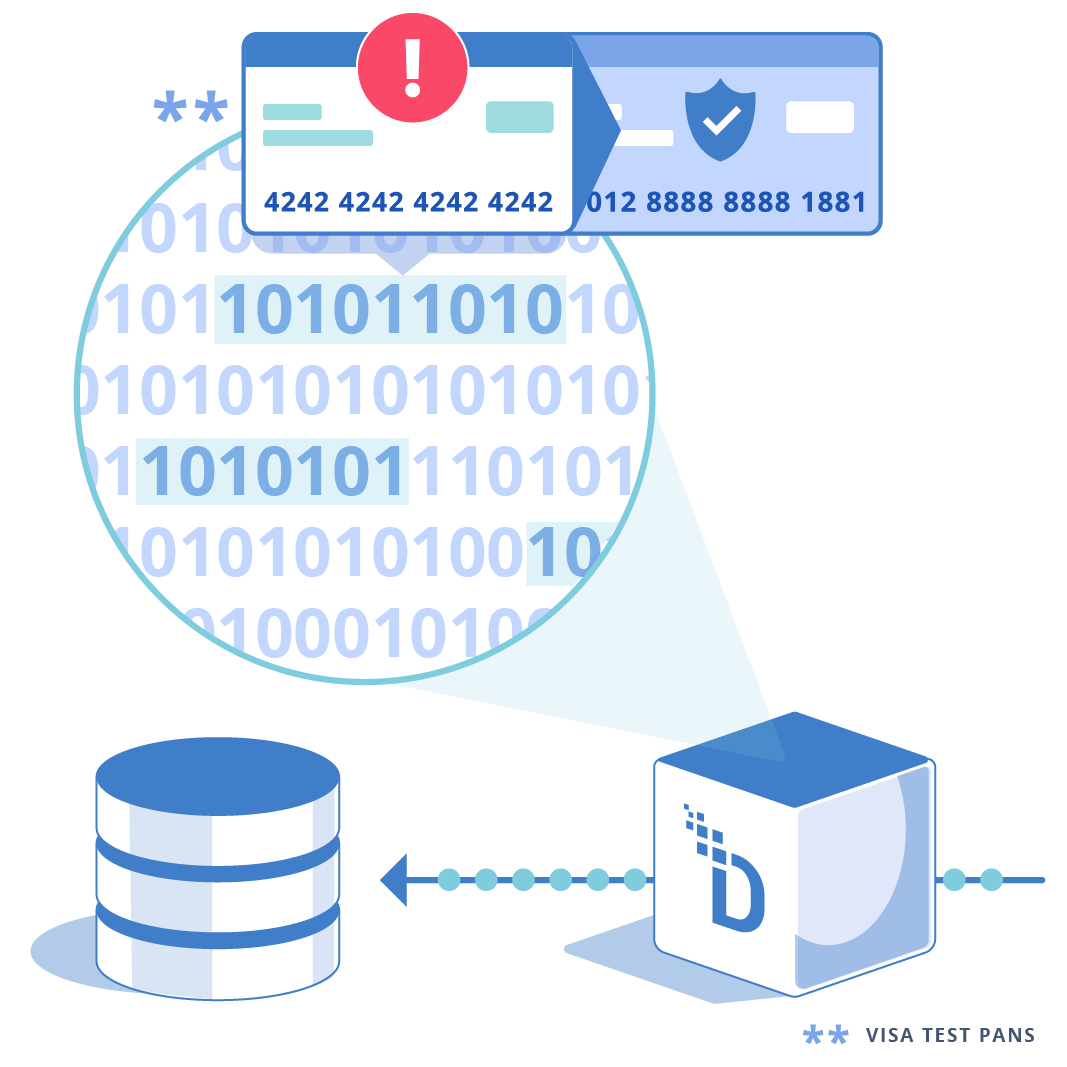

Tokenization replaces sensitive data with non-sensitive tokens that have no exploitable value outside the tokenization system.

Only authorized users/systems can retrieve PAN or other sensitive values from tokens; everything else uses tokenized data. PCI guidance emphasizes restricting PAN retrieval and protecting systems that can retrieve PAN.

Tokens retain the length, character set, and structure of the original value, so legacy schemas, validators, and downstream applications continue to work without disruption or breakage.

Keep tokens compatible with legacy schemas and validators – so you don’t refactor databases just to secure them.

Deterministic tokenization for joins/analytics; randomized for maximum privacy – choose per field and use case.

Keep sovereignty over keys (BYOK/HYOK) and integrate with enterprise key management so security owns the root of trust.

Support regional controls so tokens/keys stay where regulations require.

Tokenize PAN and broader PII/PHI (emails, IDs, SSNs, identifiers) – consolidate controls instead of stacking vendors.

A data tokenization solution is a cybersecurity system that substitutes sensitive data elements, such as credit card numbers or social security numbers, with non-sensitive equivalents called tokens. These tokens serve as references to the original data but have no intrinsic value or meaning on their own.

The primary purpose is to allow business systems to process and store the token without exposing the actual sensitive information to theft or unauthorized access. If a breach occurs in a system that holds only tokens, the attacker obtains values that are useless without access to the tokenization system.

Data tokenization works by intercepting sensitive data at an ingestion point and replacing it with a randomly generated or algorithmically derived token.

The relationship between the token and the original data is maintained securely, often in a hardened token vault.

Format-preserving tokenization ensures the token matches the structure of the original data (e.g., length and character set). When a legitimate business process requires the original data, the system performs a "detokenization" process, swapping the token back for the cleartext value for authorized users only.
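The flow described above (replace a value with a random token, keep the mapping in a vault, preserve the format, and detokenize only for authorized callers) can be sketched in a few lines. This is a minimal illustration, not DataStealth's implementation: the `TokenVault` class, its role-based check, and the in-memory dictionaries are all hypothetical stand-ins for a hardened vault and a real access-control system.

```python
import secrets
import string


class TokenVault:
    """Toy in-memory token vault (hypothetical; a real vault is a hardened, persistent store)."""

    def __init__(self, authorized_roles):
        self._token_to_value = {}
        self._value_to_token = {}
        self._authorized_roles = set(authorized_roles)

    @staticmethod
    def _random_like(value):
        # Format preservation: digits stay digits, letters keep their case,
        # and separators such as "-" are copied through unchanged.
        out = []
        for ch in value:
            if ch in string.digits:
                out.append(secrets.choice(string.digits))
            elif ch in string.ascii_lowercase:
                out.append(secrets.choice(string.ascii_lowercase))
            elif ch in string.ascii_uppercase:
                out.append(secrets.choice(string.ascii_uppercase))
            else:
                out.append(ch)
        return "".join(out)

    def tokenize(self, value):
        # Reuse an existing token so each value maps to exactly one token.
        if value in self._value_to_token:
            return self._value_to_token[value]
        token = self._random_like(value)
        # Regenerate on the rare collision with a token that is already issued.
        while token in self._token_to_value:
            token = self._random_like(value)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token, role):
        # Only callers holding an authorized role may retrieve the cleartext.
        if role not in self._authorized_roles:
            raise PermissionError(f"role {role!r} may not detokenize")
        return self._token_to_value[token]
```

A caller would tokenize at ingestion, for example `vault.tokenize("4111-1111-1111-1111")`, store only the returned token, and call `vault.detokenize(token, role)` from the few processes that genuinely need the original value.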

Tokenization is often considered more secure than encryption for data storage because it removes the sensitive data from the environment entirely.

Encryption obscures data using a mathematical formula and a key; if an attacker steals the encrypted data and the key, they can reverse the process to reveal the original information. In contrast, tokens often have no mathematical relationship to the original data.

A stolen token cannot be reversed into cleartext without access to the centralized token vault or the specific secure tokenization engine, significantly reducing the blast radius of a potential breach.

While originally popularized for credit card numbers (PANs) in the payment industry, modern data tokenization solutions can protect virtually any type of sensitive information.

This includes Personally Identifiable Information (PII), such as names, email addresses, and Social Security numbers; Protected Health Information (PHI), such as medical record numbers and patient diagnoses; and other confidential business data.

Advanced solutions like DataStealth can also tokenize unstructured data found within documents, images, and log files, ensuring comprehensive protection across the enterprise.

The primary difference between tokenization and data masking lies in reversibility and use case.

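Tokenization is designed to be reversible; the token maps back to the original data so that authorized business processes (like processing a refund) can still occur. Data masking, by contrast, is typically a one-way transformation: masked values cannot be mapped back to the originals, which suits uses such as dev/test data where the real value is never needed.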

Vault-based tokenization stores the relationship between sensitive data and tokens in a centralized database (the vault). This allows for random token generation.

Vaultless tokenization uses secure cryptographic algorithms to generate tokens on the fly without storing the mapping in a database.
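To make the vaultless idea concrete, the sketch below derives a same-length digit token from a secret key and reverses it with the same key, with no stored mapping. It is a toy Feistel construction using HMAC-SHA256 as the round function, written only for illustration: it is not the NIST FF1/FF3-1 standard, it is not DataStealth's algorithm, and the function names and round count are assumptions. Production systems should use a vetted format-preserving scheme.

```python
import hashlib
import hmac

ROUNDS = 10  # even, so the two halves end in their original positions


def _round_value(key, round_index, half, total_len):
    # Keyed pseudo-random function over the round number, input length, and one half.
    msg = f"{round_index}|{total_len}|{half}".encode()
    return int.from_bytes(hmac.new(key, msg, hashlib.sha256).digest(), "big")


def _check(digits):
    if not (digits.isascii() and digits.isdigit()) or len(digits) < 2:
        raise ValueError("expected a string of at least two ASCII digits")


def vaultless_tokenize(key, digits):
    """Map a digit string to another digit string of the same length."""
    _check(digits)
    n = len(digits)
    u, v = n // 2, n - n // 2
    a, b = digits[:u], digits[u:]
    for i in range(ROUNDS):
        m = u if i % 2 == 0 else v  # width of the half produced this round
        c = (int(a) + _round_value(key, i, b, n)) % (10 ** m)
        a, b = b, str(c).zfill(m)
    return a + b


def vaultless_detokenize(key, token):
    """Invert vaultless_tokenize using the same key; no mapping table is needed."""
    _check(token)
    n = len(token)
    u, v = n // 2, n - n // 2
    a, b = token[:u], token[u:]
    for i in reversed(range(ROUNDS)):
        m = u if i % 2 == 0 else v
        c = (int(b) - _round_value(key, i, a, n)) % (10 ** m)
        a, b = str(c).zfill(m), a
    return a + b
```

The trade-off against a vault is visible here: nothing needs to be stored or replicated, but every token is only as safe as the key, so key management becomes the root of trust.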

For PCI DSS compliance, tokenization is generally superior to encryption for reducing audit scope. According to the PCI Security Standards Council, encrypted data is still considered "cardholder data," and the systems storing it remain in scope for audits because the data is merely hidden, not removed.

Tokenization replaces the data entirely. If the original PAN is not present in the system, the system is often eligible for removal from the Cardholder Data Environment (CDE) scope.

Therefore, tokenization offers greater cost savings and operational simplification for PCI compliance strategies.

Implementing data tokenization without rewriting applications requires a solution that operates at the network or proxy layer, such as DataStealth.

Instead of modifying application code to make API calls to a tokenization server, a proxy-based solution sits in the network traffic path between the application and the database. It inspects traffic in real-time, automatically identifying and tokenizing sensitive fields before they are written to disk.

This allows enterprises to immediately secure legacy mainframes and commercial-off-the-shelf (COTS) software with zero code changes and minimal disruption.
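As a rough illustration of the inline step, the sketch below scans a text payload for card-number candidates, confirms each with the Luhn checksum, and swaps confirmed PANs for tokens before the payload is forwarded. It is a simplification under stated assumptions: a real proxy parses the wire protocol rather than raw text, and `tokenize_payload`, the regular expression, and the `tokenize` callback are hypothetical names, not DataStealth's API.

```python
import re

# 13 to 19 digits, optionally separated by single spaces or hyphens.
PAN_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(number):
    """Return True if a digit string passes the Luhn checksum used by card numbers."""
    total = 0
    for i, ch in enumerate(reversed(number)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def tokenize_payload(payload, tokenize):
    """Replace Luhn-valid card numbers in `payload` with tokenize(digits)."""

    def replace(match):
        raw = match.group(0)
        digits = re.sub(r"[ -]", "", raw)
        if not luhn_valid(digits):
            return raw  # looks numeric but is not a card number; leave it alone
        return tokenize(digits)

    return PAN_CANDIDATE.sub(replace, payload)
```

Passing the tokenizer in as a callback keeps detection separate from token generation, so the same scan could feed a vault-based or a vaultless engine.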

Well-architected data tokenization solutions introduce negligible latency, typically measured in microseconds or low single-digit milliseconds.

Solutions like DataStealth use high-performance, stateless processing architectures to perform tokenization at wire speed.

By utilizing in-memory processing and optimizing cryptographic operations, these platforms ensure that even high-frequency trading applications or real-time payment gateways maintain their required throughput levels. Network-based approaches often outperform API-based calls, which can suffer from network round-trip delays and overhead.

Yes, tokenization is a recognized method of pseudonymization under the GDPR and helps meet privacy requirements under CCPA/CPRA.

By replacing direct identifiers (such as names and email addresses) with tokens, organizations separate the data subject's identity from their transaction history.

In the event of a breach, the exposure of tokenized data is unlikely to result in a "high risk to the rights and freedoms of natural persons," potentially removing the requirement to notify regulators or affected individuals.

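Furthermore, with vault-based tokens, deleting the token mapping leaves every copy of the token unresolvable, including copies held in backups, which can support "Right to be Forgotten" requests provided backups of the mapping itself are also purged.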

Yes, DataStealth is a certified PCI DSS Level 1 Service Provider. This certification indicates that the platform has undergone rigorous third-party auditing to verify that its security controls, policies, and procedures meet the Payment Card Industry's highest standards.

For enterprise customers, using a certified provider is critical because it ensures that the tokenization process itself introduces no new risks to the environment.

It enables merchants and service providers to rely on DataStealth’s attestation of compliance (AoC) to satisfy their own auditor requirements.

Tokenization is an effective strategy for meeting HIPAA Security Rule requirements for protecting electronic Protected Health Information (ePHI).

By de-identifying patient data within databases, analytics platforms, and non-production environments, organizations significantly reduce the risk of HIPAA violations.

Where tokenized data meets HIPAA's de-identification standard (and the method for re-identification is secured), it is no longer treated as PHI, which can allow healthcare organizations to share datasets for research or operational analysis with less privacy risk and fewer contractual hurdles.

When evaluating enterprise data tokenization solutions, prioritize architecture, scalability, and ease of integration.

Look for a solution that supports Format-Preserving Tokenization (FPT) to prevent application breakage. Ensure the platform offers high availability and disaster recovery capabilities to match your uptime SLAs.

Critically, evaluate the integration method; solutions that require no code changes (proxy-based) offer faster time-to-value than SDK-heavy options.

Finally, verify that the vendor supports a wide range of data types (PCI, PII, PHI) and environments (Mainframe, Cloud, Hybrid) to avoid vendor sprawl.

Yes, robust tokenization solutions enable secure data analytics in cloud data warehouses such as Snowflake, AWS Redshift, and Google BigQuery.

The best practice is to tokenize sensitive data before uploading it to the cloud. By using deterministic tokens – where the same cleartext value always yields the same token – organizations can perform SQL joins, aggregations, and filtering on the tokenized data in the cloud warehouse. This enables powerful analytics without ever exposing raw PII or PCI data to the cloud provider, resolving data sovereignty and residency concerns.
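The join behavior follows directly from determinism, as the sketch below suggests. It uses a keyed HMAC-SHA256 digest as a stand-in deterministic token; that choice is an assumption made for brevity, and because an HMAC is one-way, a real deployment that needs detokenization would use a vault mapping or a reversible scheme instead. The function names and the `email_token` field are hypothetical.

```python
import hashlib
import hmac


def deterministic_token(key, value, prefix="tok_"):
    """Same key and same (normalized) value always yield the same token."""
    normalized = value.strip().lower().encode("utf-8")
    digest = hmac.new(key, normalized, hashlib.sha256).hexdigest()
    return prefix + digest[:24]


def join_on_token(customers, orders):
    """Inner join two lists of dicts on their shared 'email_token' column."""
    by_token = {row["email_token"]: row for row in customers}
    return [
        {**by_token[order["email_token"]], **order}
        for order in orders
        if order["email_token"] in by_token
    ]
```

Both datasets are tokenized before upload with the same key, so the warehouse can match rows on `email_token` without ever holding an email address. The equivalent SQL would be an ordinary equality join on the token column.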

Tokenization can impact database search operations depending on the token type used. If deterministic tokenization is applied, exact match searches remain fully functional because the search term can be tokenized and matched against the stored tokens.

However, range searches (e.g., "find values greater than X") or partial string searches (e.g., "starts with 4111") may not work natively on tokenized data since the tokens do not preserve the numeric or alphabetic order of the original data.

Enterprises must evaluate their query patterns and select a solution that offers specialized indexing or detokenization-for-search capabilities, as needed.
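The two query patterns can be contrasted with a small sketch. Exact match works by tokenizing the search term and comparing tokens; a range query has to fall back to detokenizing each candidate inside a trusted boundary, because token order says nothing about value order. Everything here is hypothetical: the in-memory mapping, the `limit_token` column, and the helper names exist only for illustration.

```python
import secrets

# Toy deterministic mapping: one random token per distinct value (hypothetical vault).
_forward = {}
_reverse = {}


def token_for(value):
    """Issue a token for a value, reusing the existing one if present."""
    if value not in _forward:
        token = secrets.token_hex(8)
        _forward[value] = token
        _reverse[token] = value
    return _forward[value]


def exact_match(rows, value):
    # Tokenize the search term, then compare tokens; no cleartext is needed.
    needle = _forward.get(value)
    if needle is None:
        return []  # value was never tokenized, so no row can match
    return [row for row in rows if row["limit_token"] == needle]


def range_search(rows, low, high):
    # Tokens carry no ordering, so each one must be detokenized to compare.
    # In practice this step would run only inside an authorized service.
    return [
        row for row in rows
        if low <= int(_reverse[row["limit_token"]]) <= high
    ]
```

The cost difference is the point: `exact_match` can use a normal index on the token column, while `range_search` touches the mapping for every candidate row, which is why query patterns should drive the choice of token type.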

DataStealth differentiates itself through its transparent proxy architecture.

Unlike competitors that require installing agents on every server or rewriting application code to use proprietary APIs, DataStealth sits at the network layer. It acts as a bridge between users/applications and the data stores.

This allows DataStealth to inspect traffic, identify sensitive data via policy, and tokenize it in real-time without the application or database being aware that the data has changed.

This approach eliminates the heavy lifting of code refactoring, enabling rapid deployment in complex, legacy, and hybrid environments.

Yes, DataStealth is specifically engineered to support custom-built legacy applications and mainframe environments (IBM z/OS, AS/400).

Because it operates on standard network protocols (TCP/IP, HTTP, TN3270, SQL), it allows these rigid systems to be secured without modification.

Organizations can tokenize data entering a mainframe DB2 database or a custom monolithic application by simply routing traffic through the DataStealth appliance.

This capability extends the life of legacy investments while ensuring they meet modern compliance standards, such as PCI DSS v4.0.